electronics-journal.com

18

'26


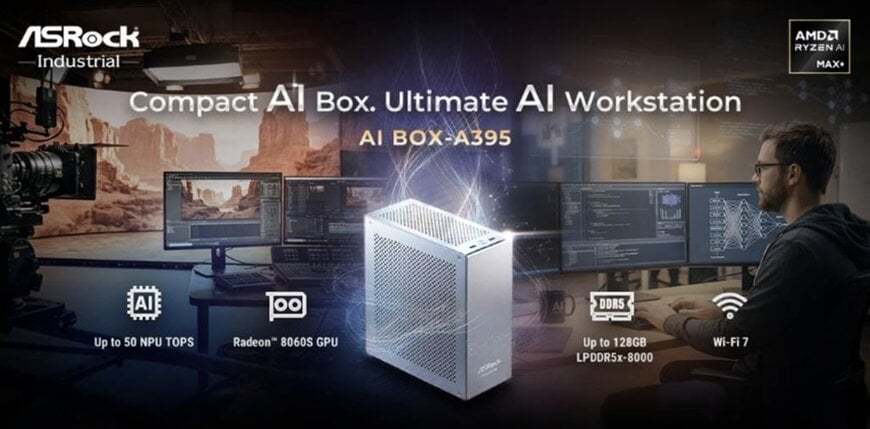

Compact Edge AI Workstation for Local Model Execution

ASRock Industrial introduces small-form-factor system combining CPU, GPU and NPU acceleration to support on-device AI inference and engineering workloads.

www.asrockind.com

Compact AI workstations are increasingly used to run inference and data processing closer to operational environments where latency and data control are critical. In this context, ASRock Industrial introduced the AI BOX-A395, a compact AI workstation designed for local AI development and edge computing deployments.

Integrated compute architecture for AI workloads

The AI BOX-A395 is based on the AMD Ryzen™ AI Max+ 395 processor, which combines Zen 5 CPU cores (up to 16 cores and 32 threads), an AMD Radeon™ 8060S Series GPU and an AMD XDNA™ 2 NPU in a single system architecture. The integrated NPU provides up to 50 TOPS of AI acceleration for inference workloads.

This heterogeneous compute architecture is designed to support AI model development, engineering simulation, 3D design and media production tasks that require parallel processing across CPU, GPU and AI accelerators. The platform supports both Windows and Linux operating systems for deployment flexibility.

Memory capacity for large AI models

The system supports up to 128 GB of LPDDR5x-8000 unified memory, allowing AI models and memory-intensive datasets to be processed locally without relying on discrete accelerators or external compute resources.

This configuration enables large language models, computer vision models and generative AI workloads to run directly on the device. Local execution lowers response latency and can reduce dependence on cloud processing where data locality matters, such as in industrial AI and engineering workflows.
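As a rough illustration of what 128 GB of unified memory means for model sizing, the sketch below estimates the weight footprint of a model from its parameter count and numeric precision. The 25% reserve for the operating system, activations and caches is an assumption made for illustration, not an ASRock Industrial figure, and the function names are hypothetical.

```python
def weight_footprint_gb(params_billions: float, bits_per_weight: int) -> float:
    """Approximate size of the model weights in GB (1 GB = 10**9 bytes)."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


def fits_in_memory(
    params_billions: float,
    bits_per_weight: int,
    memory_gb: float = 128.0,       # maximum unified memory quoted in the article
    reserve_fraction: float = 0.25  # ASSUMPTION: share kept for OS, activations, caches
) -> bool:
    """Return True if the weights fit in the memory left after the reserve."""
    usable_gb = memory_gb * (1.0 - reserve_fraction)
    return weight_footprint_gb(params_billions, bits_per_weight) <= usable_gb


if __name__ == "__main__":
    for bits in (16, 8, 4):
        size = weight_footprint_gb(70, bits)
        print(f"70B parameters at {bits}-bit: {size:.0f} GB, fits: {fits_in_memory(70, bits)}")
```

Under these assumptions, a 70-billion-parameter model needs about 140 GB at 16-bit precision and would not fit, while the same model quantized to 4 bits needs about 35 GB. Actual headroom depends on the runtime, context length and how much memory is allocated to the GPU.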

High-bandwidth connectivity for edge computing

To support data-intensive AI processing, the platform provides multiple high-speed interfaces, including two USB4 ports, one USB 3.2 Gen 2 Type-C port, two USB 3.2 Gen 2 ports and two USB 2.0 interfaces.

Network connectivity includes one 10 GbE and one 2.5 GbE Ethernet interface for high-throughput data transfer in distributed edge deployments. The system also supports up to four displays through its two HDMI 2.1 and three DisplayPort 2.1 outputs, at resolutions up to 8K.
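To put the two Ethernet interfaces in perspective, the following sketch estimates how long a dataset would take to move over each link. The 90% link efficiency and the 100 GB dataset size are assumptions for illustration; real throughput depends on protocol overhead, storage speed and the device at the other end.

```python
def transfer_seconds(size_gb: float, link_gbps: float, efficiency: float = 0.9) -> float:
    """Estimate transfer time for size_gb (1 GB = 10**9 bytes) over a link.

    efficiency is an ASSUMED fraction of the nominal line rate that is
    available to payload data.
    """
    if link_gbps <= 0 or not 0 < efficiency <= 1:
        raise ValueError("link_gbps must be positive and efficiency in (0, 1]")
    payload_bits = size_gb * 8e9
    return payload_bits / (link_gbps * 1e9 * efficiency)


if __name__ == "__main__":
    dataset_gb = 100.0  # hypothetical dataset size
    for name, rate in (("10 GbE", 10.0), ("2.5 GbE", 2.5)):
        print(f"{name}: {transfer_seconds(dataset_gb, rate):.0f} s")
```

With these assumed values, the 10 GbE port moves the dataset in roughly a minute and a half, about four times faster than the 2.5 GbE port.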

Storage expansion and wireless connectivity

Storage options include two M.2 Key M slots supporting 2242 and 2280 form factors with PCIe Gen4 x4 connectivity and RAID 0/1 configurations. This enables scalable local storage performance for AI datasets and application environments.
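The trade-off between the two RAID modes can be sketched as a simple capacity calculation: RAID 0 stripes data across both drives for capacity and throughput, while RAID 1 mirrors them for redundancy. The drive sizes below are hypothetical, and the helper name is illustrative rather than part of any vendor tool.

```python
from typing import Sequence


def raid_usable_tb(level: int, drive_sizes_tb: Sequence[float]) -> float:
    """Usable capacity in TB for a RAID 0 or RAID 1 array.

    Both modes are limited by the smallest member drive:
    RAID 0 (striping) exposes smallest * number_of_drives,
    RAID 1 (mirroring) exposes the size of a single drive.
    """
    if len(drive_sizes_tb) < 2:
        raise ValueError("RAID 0/1 needs at least two drives")
    smallest = min(drive_sizes_tb)
    if level == 0:
        return smallest * len(drive_sizes_tb)
    if level == 1:
        return smallest
    raise ValueError("only RAID 0 and RAID 1 are modelled here")


if __name__ == "__main__":
    drives = [2.0, 2.0]  # hypothetical: two 2 TB M.2 SSDs
    print("RAID 0 usable:", raid_usable_tb(0, drives), "TB (no redundancy)")
    print("RAID 1 usable:", raid_usable_tb(1, drives), "TB (survives one drive failure)")
```

In practice, RAID 0 would suit scratch space for datasets that can be re-downloaded, while RAID 1 would suit application environments that must survive a drive failure.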

An additional M.2 Key E slot supports Wi-Fi 7 and Bluetooth 5.4, providing wireless connectivity options suitable for flexible deployment scenarios.

Thermal management for sustained compute loads

The AI BOX-A395 incorporates a cooling architecture using six heat pipes, a copper baseplate and optimized airflow design to manage thermal loads generated by sustained AI processing.

This thermal design supports stable operation during continuous compute workloads while maintaining low acoustic output, which is relevant for development environments and office-based AI engineering use.

Compact system design for enterprise deployment

The system is housed in a 200 × 100 × 232 mm aluminium chassis and includes a carrying handle to support portability between development or deployment locations.

Enterprise-focused features include onboard TPM 2.0 and redundant BIOS support intended to improve system reliability and security in long-term deployments. These features position the platform for use by system integrators, enterprise developers and engineering teams deploying AI capabilities within edge computing environments.

Edited by industrial journalist Aishwarya Mambet, with AI assistance.
